Interconnection Network

Revision as of 00:32, 9 July 2013

How to invoke the network

./build/ALPHA/gem5.debug configs/example/ruby_random_test.py --num-cpus=16 --num-dirs=16 --topology=Mesh --mesh-rows=4

The default network is simple, and the default topology is crossbar.

For running garnet networks:

./build/ALPHA/gem5.debug configs/example/ruby_random_test.py --num-cpus=16 --num-dirs=16 --topology=Mesh --mesh-rows=4 --garnet-network=fixed

Topology

The connections between the various controllers are specified via Python files.

- Related Files:

- src/mem/ruby/network/topologies/Crossbar.py

- src/mem/ruby/network/topologies/Mesh.py

- src/mem/ruby/network/topologies/MeshDirCorners.py

- src/mem/ruby/network/topologies/Pt2Pt.py

- src/mem/ruby/network/topologies/Torus.py

- src/mem/ruby/network/Network.py

- src/mem/ruby/network/BasicLink.py

- Topology Descriptions:

- Crossbar: Each controller (L1/L2/Directory) is connected to every other controller via one switch (modeling the crossbar). This can be invoked from command line by --topology=Crossbar.

- Mesh: This topology requires the number of directories to be equal to the number of cpus. The number of routers/switches is equal to the number of cpus in the system. Each router/switch is connected to one L1, one L2 (if present), and one Directory. It can be invoked from command line by --topology=Mesh. The number of rows in the mesh has to be specified by --mesh-rows. This parameter enables the creation of non-symmetrical meshes too.

- MeshDirCorners: This topology requires the number of directories to be equal to 4. The number of routers/switches is equal to the number of cpus in the system. Each router/switch is connected to one L1 and one L2 (if present). Each corner router/switch is additionally connected to one Directory. It can be invoked from command line by --topology=MeshDirCorners. The number of rows in the mesh has to be specified by --mesh-rows.

- Pt2Pt: Each controller (L1/L2/Directory) is connected to every other controller via a direct link. This can be invoked from command line by --topology=Pt2Pt.

- Torus: This topology requires the number of directories to be equal to the number of cpus. The number of routers/switches is equal to the number of cpus in the system. Each router/switch is connected to one L1, one L2 (if present), and one Directory. It can be invoked from command line by --topology=Torus. The number of rows in the torus has to be specified by --mesh-rows. This parameter enables the creation of non-symmetrical tori too. By default, this file models a folded torus topology, where every link (including the wrap-around ones) has the same length, approximately twice that of a mesh link. The default link latency is thus assumed to be 2 cycles.

- Optional parameters specified by the topology files (defaults in BasicLink.py):

- latency: latency of traversal within the link.

- weight: weight associated with this link. This parameter is used by the routing table while deciding routes, as explained next in Routing.

- bandwidth_factor: For garnet networks, this is equal to the channel width in bytes. For simple networks, the bandwidth_factor translates to the bandwidth multiplier (simple/SimpleLink.cc) and the individual link bandwidth becomes bandwidth multiplier x endpoint_bandwidth (specified in SimpleNetwork.py).
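The topology constraints listed above can be collected into a small pre-flight check. The following is only a sketch: `check_topology` is a hypothetical helper, not part of gem5, and the rule that --mesh-rows must evenly divide the number of routers is an assumption that the text above does not state.

```python
def check_topology(topology, num_cpus, num_dirs, mesh_rows=None):
    """Return a list of constraint violations (empty if none were found).

    Hypothetical helper mirroring the topology descriptions above; it is
    not part of gem5.
    """
    problems = []

    # Mesh and Torus need one directory per cpu.
    if topology in ("Mesh", "Torus") and num_dirs != num_cpus:
        problems.append(
            f"{topology} requires num_dirs == num_cpus "
            f"(got {num_dirs} and {num_cpus})"
        )

    # MeshDirCorners attaches one directory to each of the 4 corner routers.
    if topology == "MeshDirCorners" and num_dirs != 4:
        problems.append(f"MeshDirCorners requires 4 directories (got {num_dirs})")

    # All grid-shaped topologies need --mesh-rows.
    if topology in ("Mesh", "MeshDirCorners", "Torus"):
        if mesh_rows is None or mesh_rows <= 0:
            problems.append(f"{topology} requires --mesh-rows")
        elif num_cpus % mesh_rows != 0:
            # Assumption: one router per cpu, arranged in complete rows.
            problems.append(
                f"--mesh-rows={mesh_rows} does not divide {num_cpus} routers"
            )

    # Crossbar and Pt2Pt carry no constraints in the descriptions above.
    return problems


if __name__ == "__main__":
    print(check_topology("Mesh", num_cpus=16, num_dirs=16, mesh_rows=4))
    print(check_topology("MeshDirCorners", num_cpus=16, num_dirs=16, mesh_rows=4))
```

A 16-cpu system with --mesh-rows=4 passes for Mesh, while --mesh-rows=2 would describe a non-symmetrical 2x8 mesh that passes as well.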

Routing

Based on the topology, shortest path graph traversals are used to populate routing tables at each router/switch. The default routing algorithm tries to choose the route with minimum number of link traversals. Links can be given weights in the topology files to model different routing algorithms. For example, in Mesh.py, MeshDirCorners.py and Torus.py, Y-direction links are given weights of 2, while X-direction links are given weights of 1, resulting in XY traversals. adaptive_routing (in src/mem/ruby/network/simple/SimpleNetwork.py) can be enabled to make the simple network choose routes based on occupancy of queues at each output port.
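To illustrate how link weights can yield XY traversals, here is a minimal standalone sketch. It is not gem5 code: `build_mesh`, `distances_to` and `route` are hypothetical names, and the tie-breaking rule (among output links that lie on a minimum-weight path, take the link with the lowest weight) is an assumption about how routes are selected.

```python
import heapq

X_WEIGHT, Y_WEIGHT = 1, 2  # weights quoted above for Mesh.py


def build_mesh(rows, cols):
    """Return {router: [(neighbor, weight), ...]} for a rows x cols mesh."""
    links = {r * cols + c: [] for r in range(rows) for c in range(cols)}
    for r in range(rows):
        for c in range(cols):
            node = r * cols + c
            if c + 1 < cols:  # X-direction link to the east neighbor
                links[node].append((node + 1, X_WEIGHT))
                links[node + 1].append((node, X_WEIGHT))
            if r + 1 < rows:  # Y-direction link to the south neighbor
                links[node].append((node + cols, Y_WEIGHT))
                links[node + cols].append((node, Y_WEIGHT))
    return links


def distances_to(links, dest):
    """Dijkstra: minimum total link weight from every router to dest."""
    dist = {node: float("inf") for node in links}
    dist[dest] = 0
    heap = [(0, dest)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist[node]:
            continue  # stale heap entry
        for neighbor, weight in links[node]:
            if d + weight < dist[neighbor]:
                dist[neighbor] = d + weight
                heapq.heappush(heap, (d + weight, neighbor))
    return dist


def route(links, src, dest):
    """Follow minimum-weight hops, preferring the lowest-weight link."""
    dist = distances_to(links, dest)
    path, node = [src], src
    while node != dest:
        # Keep only links that stay on a minimum-weight path.
        candidates = [(w, n) for n, w in links[node] if w + dist[n] == dist[node]]
        _, node = min(candidates)  # assumed tie-break: lowest weight first
        path.append(node)
    return path


if __name__ == "__main__":
    mesh = build_mesh(4, 4)
    print(route(mesh, 0, 15))  # [0, 1, 2, 3, 7, 11, 15]
```

In the 4x4 example the packet first exhausts its X-direction hops (routers 0 to 3) and only then moves in Y, which is the XY ordering described above. Note that every minimal route has the same total weight, so the ordering comes from the tie-break rather than from the path cost itself.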

Flow-Control and Router Microarchitecture

Ruby supports two network models, Simple and Garnet, which favor simulation speed and detailed modeling, respectively.

- Related Files:

- src/mem/ruby/network/Network.py

- src/mem/ruby/network/simple

- src/mem/ruby/network/simple/SimpleNetwork.py

- src/mem/ruby/network/garnet/BaseGarnetNetwork.py

- src/mem/ruby/network/garnet/fixed-pipeline

- src/mem/ruby/network/garnet/fixed-pipeline/GarnetNetwork_d.py

- src/mem/ruby/network/garnet/flexible-pipeline

- src/mem/ruby/network/garnet/flexible-pipeline/GarnetNetwork.py

Configuration and Setup

The default network model in Ruby is the simple network. Garnet fixed-pipeline or flexible-pipeline networks can be enabled by adding --garnet-network=fixed, or --garnet-network=flexible on the command line, respectively.

- Configuration:

Some of the network parameters specified in Network.py are:

- number_of_virtual_networks: This is the maximum number of virtual networks. The actual number of active virtual networks is determined by the protocol.

- control_msg_size: The size of control messages in bytes. Default is 8. m_data_msg_size in Network.cc is set to the block size in bytes + control_msg_size.

Simple Network

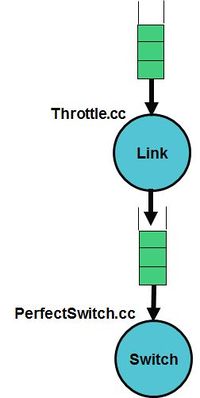

The simple network models hop-by-hop network traversal, but abstracts out detailed modeling within the switches. The switches are modeled in simple/PerfectSwitch.cc while the links are modeled in simple/Throttle.cc. The flow-control is implemented by monitoring the available buffers and available bandwidth in output links before sending.

- Configuration:

Simple network uses the generic network parameters in Network.py. Additional parameters are specified in simple/SimpleNetwork.py:

- buffer_size: Size of the buffers at each switch input and output port. A value of 0 implies infinite buffering.

- endpoint_bandwidth: Bandwidth at the end points of the network, in thousandths of a byte.

- adaptive_routing: This enables adaptive routing based on occupancy of output buffers.

Garnet Networks

Garnet is a detailed interconnection network model inside gem5. It consists of a detailed fixed-pipeline model, and an approximate flexible-pipeline model.

The fixed-pipeline model is intended for low-level interconnection network evaluations and models the detailed micro-architectural features of a 5-stage Virtual Channel router with credit-based flow-control. Researchers interested in investigating different network microarchitectures can readily modify the modeled microarchitecture and pipeline. Also, for system level evaluations that are not concerned with the detailed network characteristics, this model provides an accurate network model and should be used as the default model.

The flexible-pipeline model is intended to provide a reasonable abstraction of all interconnection network models, while allowing the router pipeline depth to be flexibly adjusted. A router pipeline might range from a single cycle to several cycles. For evaluations that wish to easily change the router pipeline depth, the flexible-pipeline model provides a neat abstraction that can be used.

If your use of Garnet contributes to a published paper, please cite the Garnet research paper.

- Configuration

Garnet uses the generic network parameters in Network.py. Additional parameters are specified in garnet/BaseGarnetNetwork.py:

- ni_flit_size: flit size in bytes. Flits are the granularity at which information is sent from one router to the other. Default is 16 (=> 128 bits). [This default value of 16 results in control messages fitting within 1 flit, and data messages fitting within 5 flits]. Garnet requires the ni_flit_size to be the same as the bandwidth_factor (in network/BasicLink.py) as it does not model variable bandwidth within the network.

- vcs_per_vnet: number of virtual channels (VC) per virtual network. Default is 4.

The following parameters in garnet/fixed-pipeline/GarnetNetwork_d.py are only valid for fixed-pipeline:

- buffers_per_data_vc: number of flit-buffers per VC in the data message class. Since data messages occupy 5 flits, this value can lie between 1 and 5. Default is 4.

- buffers_per_ctrl_vc: number of flit-buffers per VC in the control message class. Since control messages occupy 1 flit, and a VC can only hold one message at a time, this value has to be 1. Default is 1.

The following parameters in garnet/flexible-pipeline/GarnetNetwork.py are only valid for flexible-pipeline:

- buffer_size: Size of buffers per VC. A value of 0 implies infinite buffering.

- number_of_pipe_stages: number of pipeline stages in each router in the flexible-pipeline model. Default is 4.
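The flit counts quoted for ni_flit_size can be reproduced with a short calculation. This is a sketch, not gem5 code: `flits_per_message` is a hypothetical helper, and the 64-byte cache block size is an assumption chosen because it is consistent with the 5-flit figure above.

```python
def flits_per_message(msg_size_bytes, ni_flit_size=16):
    """Number of flits needed to carry a message (ceiling division)."""
    return -(-msg_size_bytes // ni_flit_size)


control_msg_size = 8   # default from Network.py, in bytes
block_size = 64        # assumption: 64-byte cache block
data_msg_size = block_size + control_msg_size  # as set in Network.cc

if __name__ == "__main__":
    print(flits_per_message(control_msg_size))  # 1
    print(flits_per_message(data_msg_size))     # 5
```

With these values a data message is 72 bytes, which needs 4.5 flits of 16 bytes and therefore rounds up to 5, while an 8-byte control message fits in a single flit.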

- Additional features

- Routing: Currently, garnet only models deterministic routing using the routing tables described earlier.

- Modeling variable link bandwidth: The bandwidth_factor specifies the link bandwidth as the number of bytes per cycle per network link. ni_flit_size has to be the same as this value. Links with lower bandwidth can be modeled by specifying a longer latency across them in the topology file (as explained earlier).

- Multicast messages: The network modeled does not have hardware multi-cast support within the network. A multi-cast message gets broken into multiple uni-cast messages at the interface to the network.

- Garnet fixed-pipeline network

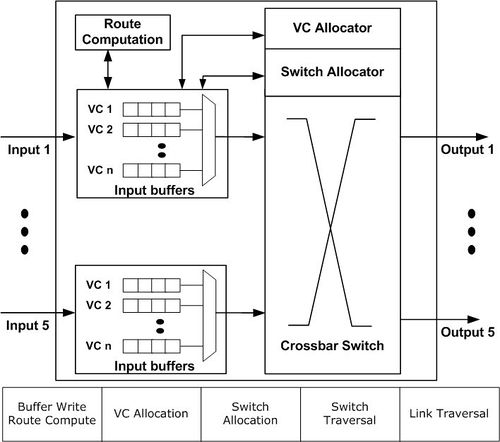

The garnet fixed-pipeline model implements a classic 5-stage Virtual Channel router. The 5 stages are:

- Buffer Write (BW) + Route Compute (RC): The incoming flit gets buffered and computes its output port.

- VC Allocation (VA): All buffered flits arbitrate for VCs at the next routers. [The allocation occurs in a separable manner: First, each input VC chooses one output VC, using input arbiters, and places a request for it. Then, each output VC breaks conflicts via output arbiters]. All arbiters in ordered virtual networks are queueing to maintain point-to-point ordering. All other arbiters are round-robin.

- Switch Allocation (SA): All buffered flits try to reserve the switch ports for the next cycle. [The allocation occurs in a separable manner: First, each input chooses one input VC, using input arbiters, which places a switch request. Then, each output port breaks conflicts via output arbiters]. All arbiters in ordered virtual networks are queueing to maintain point-to-point ordering. All other arbiters are round-robin.

- Switch Traversal (ST): Flits that won SA traverse the crossbar switch.

- Link Traversal (LT): Flits from the crossbar traverse links to reach the next routers.
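The separable allocation described for the SA stage can be sketched as two rounds of arbitration. This is an illustrative model only, not Garnet's implementation: the class names are hypothetical, only round-robin arbiters are modeled (the queueing arbiters used in ordered virtual networks are left out), and an input arbiter advances its pointer even when its choice later loses at the output stage.

```python
class RoundRobinArbiter:
    """Grants one requester per call, rotating priority after each grant."""

    def __init__(self, size):
        self.size = size
        self.next = 0  # index that currently has the highest priority

    def grant(self, requests):
        """requests: set of requesting indices. Returns the winner or None."""
        for offset in range(self.size):
            idx = (self.next + offset) % self.size
            if idx in requests:
                self.next = (idx + 1) % self.size
                return idx
        return None


class SeparableSwitchAllocator:
    def __init__(self, num_ports, num_vcs):
        self.input_arbiters = [RoundRobinArbiter(num_vcs) for _ in range(num_ports)]
        self.output_arbiters = [RoundRobinArbiter(num_ports) for _ in range(num_ports)]

    def allocate(self, requests):
        """requests: {(in_port, vc): out_port}. Returns {out_port: (in_port, vc)}."""
        # Stage 1: each input port picks one of its requesting VCs.
        chosen = {}  # in_port -> (vc, out_port)
        for in_port, arbiter in enumerate(self.input_arbiters):
            vcs = {vc for (port, vc) in requests if port == in_port}
            vc = arbiter.grant(vcs)
            if vc is not None:
                chosen[in_port] = (vc, requests[(in_port, vc)])

        # Stage 2: each output port breaks conflicts among the chosen inputs.
        grants = {}
        for out_port, arbiter in enumerate(self.output_arbiters):
            contenders = {p for p, (_, out) in chosen.items() if out == out_port}
            winner = arbiter.grant(contenders)
            if winner is not None:
                grants[out_port] = (winner, chosen[winner][0])
        return grants


if __name__ == "__main__":
    alloc = SeparableSwitchAllocator(num_ports=2, num_vcs=2)
    reqs = {(0, 0): 1, (0, 1): 0, (1, 0): 1}
    print(alloc.allocate(reqs))  # cycle 1
    print(alloc.allocate(reqs))  # cycle 2, arbiter priorities have rotated
```

In the first cycle both inputs pick a VC headed for output 1, so only one of them wins and output 0 stays idle even though a flit was waiting for it. This loss of matching quality is the usual cost of a separable allocator; the rotated priorities let both outputs be used in the second cycle.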

The flow-control implemented is credit-based.

- Garnet flexible-pipeline network

The garnet flexible-pipeline model should be used when one desires a router pipeline other than 5 stages (the 5 stages include the link traversal stage). All the components of a router (buffers, VC and switch allocators, switch, etc.) are modeled similarly to the fixed-pipeline design, but the pipeline depth is not modeled and is instead taken from the input parameter number_of_pipe_stages. The flow-control is implemented by monitoring the availability of buffers at each output port before sending.